Terminology and key issues with Machine Learning

November 7, 2015 Leave a comment

These are some of the terms which are used in machine learning algorithms.

- Training Example: An example of the form [x, f(x)]. Statisticians call it ‘Sample’. It is also called ‘Training Instance’.

- Target Function: This is the true function ‘f’, that we are trying to learn.

- Target Concept: It is a boolean function where

- f(x) = 1 are called positive instances

- f(x) = 0 are called negative instances

- Hypothesis: In every algorithm we will try to come up with some hypothesis space which is close to target function ‘f’.

- Hypothesis Space: The space of all hypothesis that can be output by a program. Version Space is a subset of this space.

- Classifier: It’s a discrete valued function.

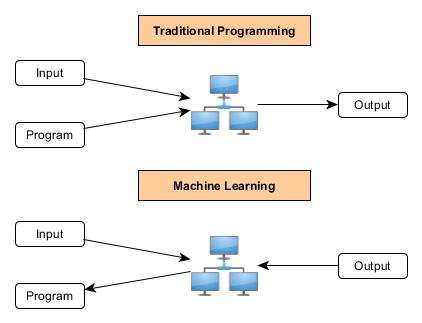

- Classifier is what a learner outputs. A learning program is a program where output is also a program.

- Once we have the classifier we replace the learning algorithm with the classifier.

- Program vs Output and Learner vs Classifier are same

Some of the notations commonly used in Machine Learning related white papers

Some of the key issues with machine learning algos

- What is a good hypothesis space? Is past data good enough?

- What algorithms fit to what spaces? Different spaces need different algorithms

- How can we optimize the accuracy of future data points? (this is also called as ‘Problem of Overfitting‘)

- How to select the features from the training examples? (this is also called ‘Curse of Dimentionality‘)

- How can we have confidence in results? How much training data is required to find accurate hypothesis (it’s a statistics question)

- Are learning problems computationally intractable? (Is the solution scalable)

- Engineering problem? (how to formulate application problems into ML problems)

Note: Problem of Overfitting and Curse of Dimentionality will be there with most of the real time problems, we will look into each of these problems while studying individual algorithms.

REFERENCES

http://www.cs.waikato.ac.nz/Teaching/COMP317B/Week_1/AlgorithmDesignTechniques.pdf

Recent Comments