Hadoop and Spark Installation on Raspberry Pi-3 Cluster – Part-4

February 16, 2017

In this part we will configure a slave node. Here are the steps:

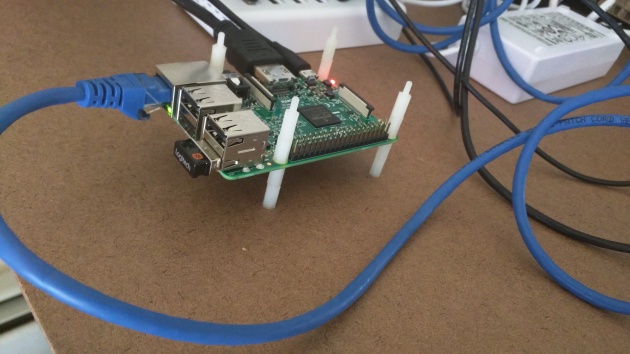

1. Mount the second Raspberry Pi-3 device on the nylon standoffs (on top of the master node)

2. Load the image from Part 2 onto an SD card

3. Insert the SD card into the Raspberry Pi-3 (RPI) device

4. Connect the RPI to the keyboard via a USB port

5. Connect it to the monitor via an HDMI cable

6. Connect it to the Ethernet switch via the Ethernet port

7. Connect it to the USB switch via the micro USB port

8. Make the Hadoop-related changes on the slave node

Steps 1-7 are all physical setup, so I am skipping the details.

Once the device is powered on, log in using the external keyboard and monitor and change the hostname from rpi3-0 (inherited from the base image) to rpi3-1.
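As a rough sketch of that hostname change, assuming a Raspbian-style layout where the name is stored in /etc/hostname and may also appear on a 127.0.1.1 line in /etc/hosts. The function name set_hostname and its optional ROOT argument are made up for illustration and do not come from the original setup:

```shell
# set_hostname NEW [ROOT]
# Hypothetical helper: writes NEW into ROOT/etc/hostname and, if ROOT/etc/hosts
# has a 127.0.1.1 line, points that line at NEW. ROOT defaults to "" (the live
# system), so on the Pi it must be run from a root shell.
set_hostname() {
    new="$1"
    root="${2:-}"
    printf '%s\n' "$new" > "$root/etc/hostname"
    if grep -q '^127\.0\.1\.1' "$root/etc/hosts" 2>/dev/null; then
        # Relies on GNU sed's in-place editing, which Raspbian ships
        sed -i "s/^127\.0\.1\.1.*/127.0.1.1 $new/" "$root/etc/hosts"
    fi
}

# Example on the slave (as root), followed by a reboot:
#   set_hostname rpi3-1
```

Passing a scratch directory as ROOT lets you try the function without touching the real system files.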

Step #8: Hadoop Related Configuration

- Setup HDFS

    sudo mkdir -p /hdfs/tmp
    sudo chown hduser:hadoop /hdfs/tmp
    chmod 750 /hdfs/tmp
    hdfs namenode -format

- Update /etc/hosts file

    127.0.0.1 localhost
    192.168.2.1 rpi3-0
    192.168.2.101 rpi3-1
    192.168.2.102 rpi3-2
    192.168.2.103 rpi3-3

- Repeat the above steps for each slave node. For every slave node you add, ensure that:

  - ssh is set up from the master node to the slave node

  - the slaves file on the master is updated

  - the /etc/hosts file on both master and slave is updated
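The bookkeeping in that checklist could be scripted along the following lines. This is only a sketch: append_once and register_slave are hypothetical helper names, and the slaves file path in the usage comment assumes the default Hadoop 2.x layout under the /opt/hadoop-2.7.3 install directory used in this series.

```shell
# append_once LINE FILE
# Append LINE to FILE unless an identical line is already present.
append_once() {
    grep -qxF -- "$1" "$2" 2>/dev/null || printf '%s\n' "$1" >> "$2"
}

# register_slave NAME IP SLAVES_FILE HOSTS_FILE
# Record a slave in the slaves file (one hostname per line) and add its
# "IP hostname" entry to the hosts file. Safe to run more than once.
register_slave() {
    append_once "$1" "$3"
    append_once "$2 $1" "$4"
}

# Example on the master, as a user allowed to write both files
# (paths are assumptions):
#   register_slave rpi3-1 192.168.2.101 /opt/hadoop-2.7.3/etc/hadoop/slaves /etc/hosts
#   ssh-copy-id hduser@rpi3-1   # passwordless ssh from master to slave
```

Because each append is skipped when the line already exists, rerunning the script after adding another slave does not create duplicate entries.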

Start the Hadoop/Spark cluster

- Start the dfs and yarn services

    cd /opt/hadoop-2.7.3/sbin
    ./start-dfs.sh
    ./start-yarn.sh

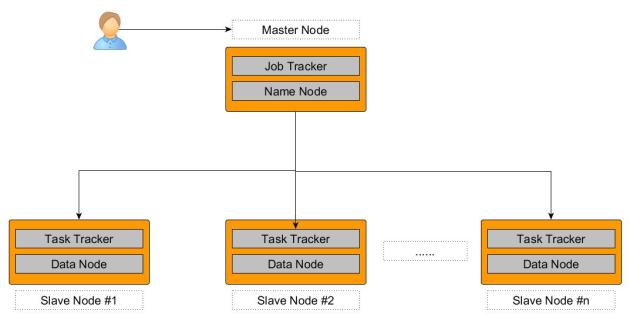

- On the master node, “jps” should show the following processes

    hduser@rpi3-0:~ $ jps
    20421 ResourceManager
    20526 NodeManager
    19947 NameNode
    20219 SecondaryNameNode
    24555 Jps
    20050 DataNode

- On a slave node, “jps” should show the following processes

    hduser@rpi3-3:/opt/hadoop-2.7.3/logs $ jps
    2294 NodeManager
    2159 DataNode
    2411 Jps

- To verify the installation, run a Hadoop job and a Spark job on the cluster; each job's console output should include the Application Master tracking URL.

- Run a Spark job

    spark-submit --class com.learning.spark.SparkWordCount --master yarn --executor-memory 512m ~/word_count-0.0.1-SNAPSHOT.jar /ntallapa/word_count/text 2

- Run the example MapReduce job

    hadoop jar /opt/hadoop-2.7.3/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.3.jar wordcount /ntallapa/word_count/text /ntallapa/word_count/output
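The “jps” checks described earlier can also be scripted, which is handy to run on each node before submitting jobs. The following is a minimal sketch; missing_daemons is a hypothetical helper name, and the expected daemon lists are simply the ones shown in the jps listings in this post.

```shell
# missing_daemons "JPS_OUTPUT" NAME...
# Print every expected daemon NAME that does not appear as a process name
# (second column) in the given jps output. Prints nothing if all are running.
missing_daemons() {
    out="$1"
    shift
    for name in "$@"; do
        printf '%s\n' "$out" | awk '{print $2}' | grep -qxF -- "$name" \
            || printf '%s\n' "$name"
    done
}

# Example on the master:
#   missing_daemons "$(jps)" ResourceManager NodeManager NameNode SecondaryNameNode DataNode
# Example on a slave:
#   missing_daemons "$(jps)" NodeManager DataNode
```

Matching on the whole process name keeps NameNode from being confused with SecondaryNameNode.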
